There are a hundred things unpleasant about being unwell. The foul taste of meds, visiting a doctor, shiny needles and hours spent in waiting rooms for tests. If it’s not a fleeting illness, the scanty gown, cold and rough bedding and the giant intimidating machine at the mercy of a temperamental technician are dreary even for the most cheerful among us. While it’s unlikely that we’ll be rid of flavourful medicines and big machines anytime soon, we could always hope for a cute pocket health assistant like Big Hero 6 to inflate out from science fiction and do our medical bidding. But researchers in collaboration with Intel have made some remarkable progress towards improving some of the key aspects of healthcare with machine learning. MRIs Here’s a prime example of an intimidating medical machine: Magnetic Resonance Imaging, or MRI uses large spinning magnets, radio waves and a cold hard tray that slowly inches you into a claustrophobic, narrow tube. [caption id=“attachment_5186181” align=“alignnone” width=“1280”] Currently, an MRI takes anywhere from 10 minutes to an hour to complete a scan. Flickr[/caption] CT Scans Another fairly common medical scan is a Computer (Axial) Tomography scan, also called a CT or CAT scan. It uses rotating X-ray machines and computers to produce a cross-sectional view of the body as a series of ‘slices’. [caption id=“attachment_5186211” align=“alignnone” width=“1280”]

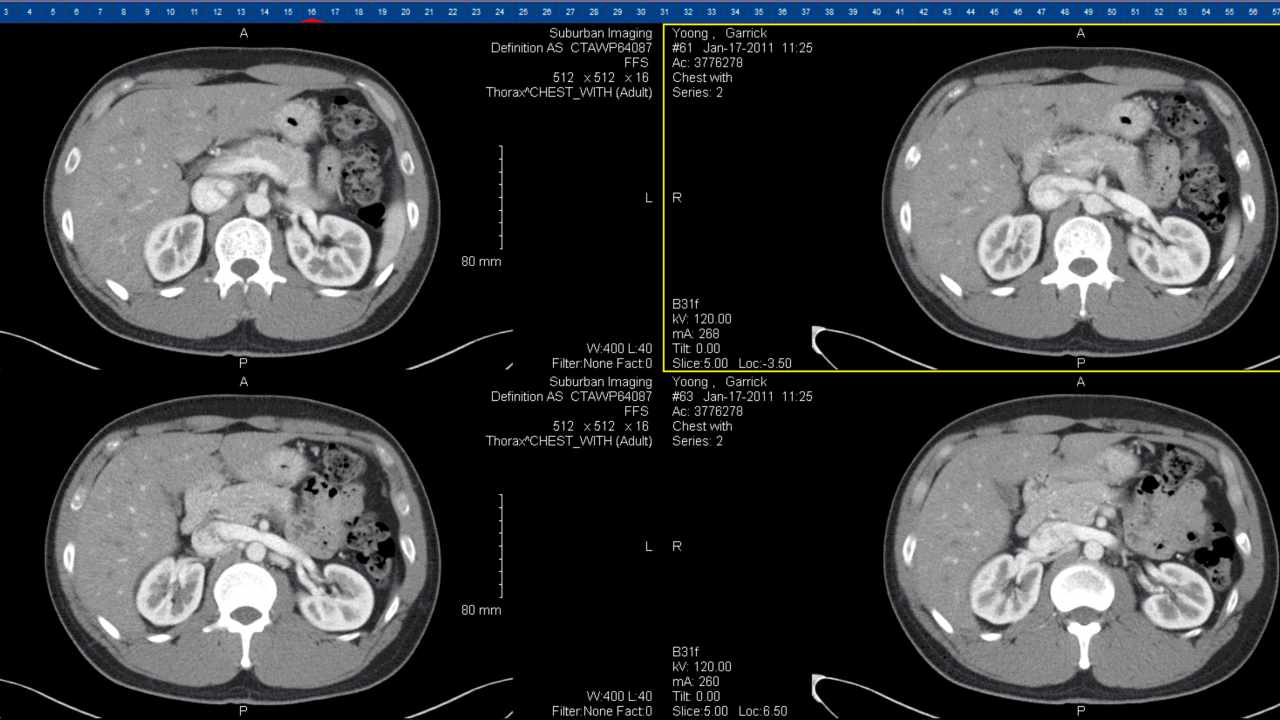

This image shows 4 slices of a chest CT scan. Currently, a CT scan takes anywhere between 30 to 45 minutes from start to finish. Flickr[/caption] Ultrasounds Widely known for its use in checkups during pregnancy, ultrasounds have a reputation of being both safe and painless. In a case study at the Zheijiang hospital in China, researchers found that roughly 80,000 radiologists spent most of the time looking at normal images. They reported that for a patient population of 1.3 billion, there weren’t enough radiology experts to satisfy the demand for diagnosis.

This image shows 4 slices of a chest CT scan. Currently, a CT scan takes anywhere between 30 to 45 minutes from start to finish. Flickr[/caption] Ultrasounds Widely known for its use in checkups during pregnancy, ultrasounds have a reputation of being both safe and painless. In a case study at the Zheijiang hospital in China, researchers found that roughly 80,000 radiologists spent most of the time looking at normal images. They reported that for a patient population of 1.3 billion, there weren’t enough radiology experts to satisfy the demand for diagnosis.

Medical imaging in India and large parts of the world is still carried out manually or in a semi-automated manner, Mark Burby, Intel’s Health and Life Science Director of Sales, explains. The ability of machines to process scanned images and audio quickly, without the element of human error, has been a key item on the agenda of Intel and Stanford’s shared research in healthcare AI.

Turning to machine learning for answers

Burby says that the ability of machines to avoid human error has brought down the error rate in diagnosis and in the recognition of symptoms, be it in images (an MRI or CT scan, for instance) or audio files (like an ECG). Breaking each scan down into patches reduces the memory needed to process it, which makes traditional computing and manual analysis easier. But it also lowers the likelihood of finding a potential tumour in the entire region scanned.

Despite the challenges, researchers have fed AI software large amounts of data, a little at a time, and trained it to process data from scans like MRIs and X-rays and look for signs of abnormality.

Using AI to diagnose scans

Detecting tumours in MRI scans is one avenue where ‘**inference learning**’ of this kind has shown remarkable results, Burby said. Scans that once took hours to analyse can now be processed in minutes to confirm a cancer diagnosis. Researchers were also able to increase the accuracy of scans and reduce the time busy doctors and technicians spend looking at normal images. This also allowed 70 percent of the workload to be shifted to assistants and nurses, giving doctors more time to attend to patients, according to their findings.

An advanced version of deep learning technology called high-performing inferencing, developed jointly with GE, has made CT scans considerably quicker. Software that analyses these scans can now process them at speeds of 600 images per second on a good day, claims Intel.

Paediatric MRIs

MRI is a technique used by medical professionals the world over to diagnose serious conditions in young children. What makes this particularly tricky is the high degree of safety and low radiation damage it demands.

A CS MRI scan of the knee (on the right) shows more detail and resolution than a regular MRI (on the left). Image courtesy: Radiology/Vasanawala et al

In the above image, a method called compressed sensing (CS) has been used to process an image of the soft tissue surrounding the knee of a 5-year-old boy with pain. A CS-MRI has far less noise and better resolution than a manual, unprocessed scan, and removes the need for an anaesthetic.

Clinical Trials

TEVA Pharmaceuticals in Jerusalem teamed up with Intel to use a combination of sensors in wearable technology and mobile apps to monitor intake, updates and the progress of patients with Huntington’s disease in clinical trials. Researchers used machine learning to process data from 90 patients over six months from different clinics and countries. Data from the study’s subjects could be sent via the cloud directly to researchers in a lab for processing.

Walking the line between risk and reward

Machine learning has automated large, otherwise manual, steps in clinical trials. An MRI or CT scan could be far less tedious for patients in a matter of years if these advances make their way into primary healthcare systems in India. Early detection of tumours, better access for more patients and affordability are some other likely advantages.

“Introducing meaningful technology can make a large impact on healthcare,” Burby says. “The challenge, of course, is that AI is still in a developmental phase, and certainly not perfect at this stage.”

The advances in healthcare from using artificial intelligence, remarkable as they are, are still just a fraction of what’s to come.
