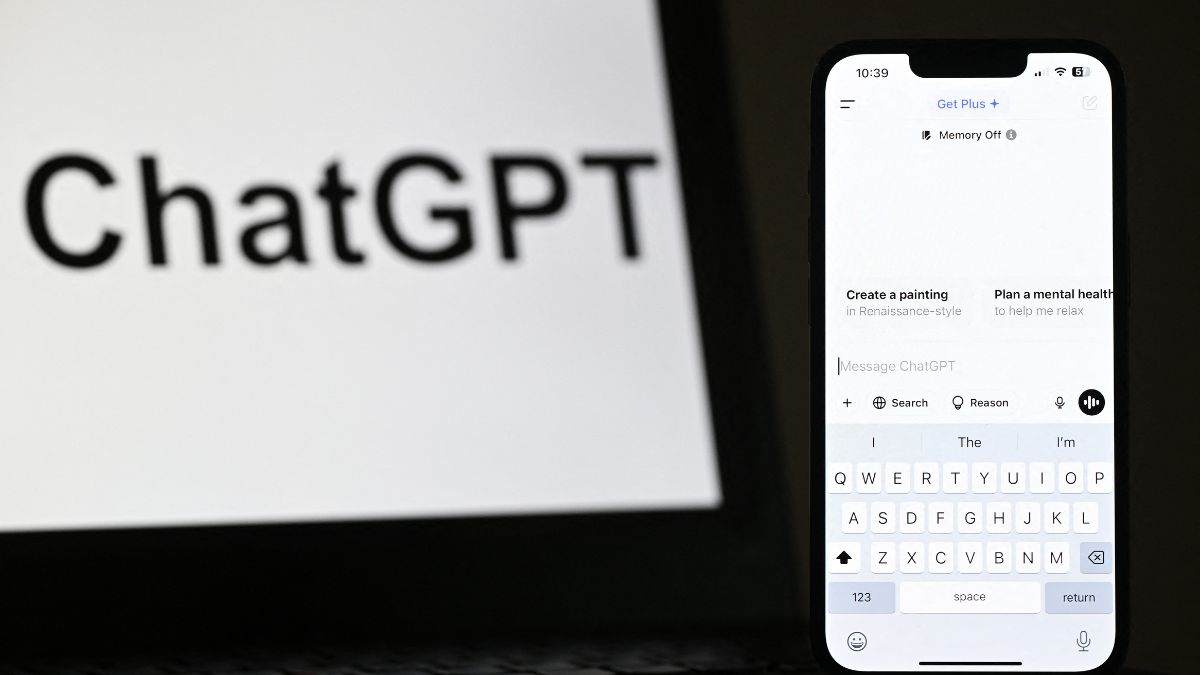

OpenAI is introducing a new age prediction system on ChatGPT designed to help determine whether an account likely belongs to a user under 18, allowing the company to tailor safeguards and provide a safer experience for teens.

At its core, the new system uses machine learning to make an informed guess about whether a user is likely a teen, applying extra protections automatically if so.

OpenAI introduces new age-related restrictions: How does it work?

According to OpenAI, the age prediction model analyses a mix of behavioural and account-level signals. These include how long an account has existed, typical activity times, usage patterns, and any stated age.

The company emphasises that the model does not rely on sensitive personal data, instead learning from broad, anonymised trends.

We’re rolling out age prediction on ChatGPT to help determine when an account likely belongs to someone under 18, so we can apply the right experience and safeguards for teens.

— OpenAI (@OpenAI) January 20, 2026

Adults who are incorrectly placed in the teen experience can confirm their age in Settings > Account.…

If the model predicts that an account likely belongs to someone under 18, ChatGPT will automatically enable a stricter safety mode designed to reduce exposure to potentially harmful or sensitive content. That includes topics such as graphic violence, gory imagery, viral “challenges” that could encourage risky behaviour, or any form of sexual or violent role play.

Also off-limits are depictions of self-harm, content that glamorises unhealthy body standards or extreme dieting, and materials that could encourage body shaming.

The framework behind these restrictions draws on child development research and expert input, acknowledging that teens process risk, impulse control, and peer influence differently from adults.

OpenAI adds that when it’s uncertain about a user’s age, the system defaults to the safer experience rather than taking chances.
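
The fallback rule described above can be sketched in a few lines of Python. This is only an illustration, not OpenAI's implementation: the function name `choose_experience`, the `adult_threshold` value, the `verified_adult` flag, and the idea of a single probability score are all assumptions made for the example.

```python
from typing import Optional


def choose_experience(
    p_under_18: Optional[float],
    verified_adult: bool = False,
    adult_threshold: float = 0.2,  # hypothetical cut-off, chosen for illustration
) -> str:
    """Pick "teen" or "adult", defaulting to "teen" whenever the signal is unclear.

    p_under_18 is a hypothetical model score in [0, 1]; None means no usable score.
    """
    # An adult who has confirmed their age is never placed in the teen experience.
    if verified_adult:
        return "adult"
    # No score at all counts as uncertainty, so fall back to the safer mode.
    if p_under_18 is None:
        return "teen"
    # Only a confidently low score unlocks the adult experience.
    return "adult" if p_under_18 < adult_threshold else "teen"
```

In this sketch a borderline score such as 0.5 lands in the teen experience, and only a confirmed adult or a clearly low score gets the standard one, which mirrors the "safer by default" behaviour the company describes.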

For teens already using ChatGPT, these guardrails aren’t entirely new: users who declare they’re under 18 at signup already receive an adapted experience with extra content filters. However, the new age prediction model helps extend those protections more broadly and consistently.

Parents will also be able to customise their teen’s ChatGPT experience through parental controls, including setting “quiet hours” when ChatGPT can’t be accessed, limiting features such as memory and model training, and receiving alerts if the system detects signs of acute distress.

Rollout timeline

OpenAI says the rollout of age prediction is already underway for ChatGPT users on consumer plans. The company will monitor its performance closely, using the data to refine accuracy over time.

In the European Union, the feature will arrive in the coming weeks to comply with regional privacy and safety requirements.
