Nvidia CEO Jensen Huang said the company's next-generation chips are in full production and can deliver five times the artificial intelligence computing of the company’s previous chips when serving up chatbots and other AI apps.

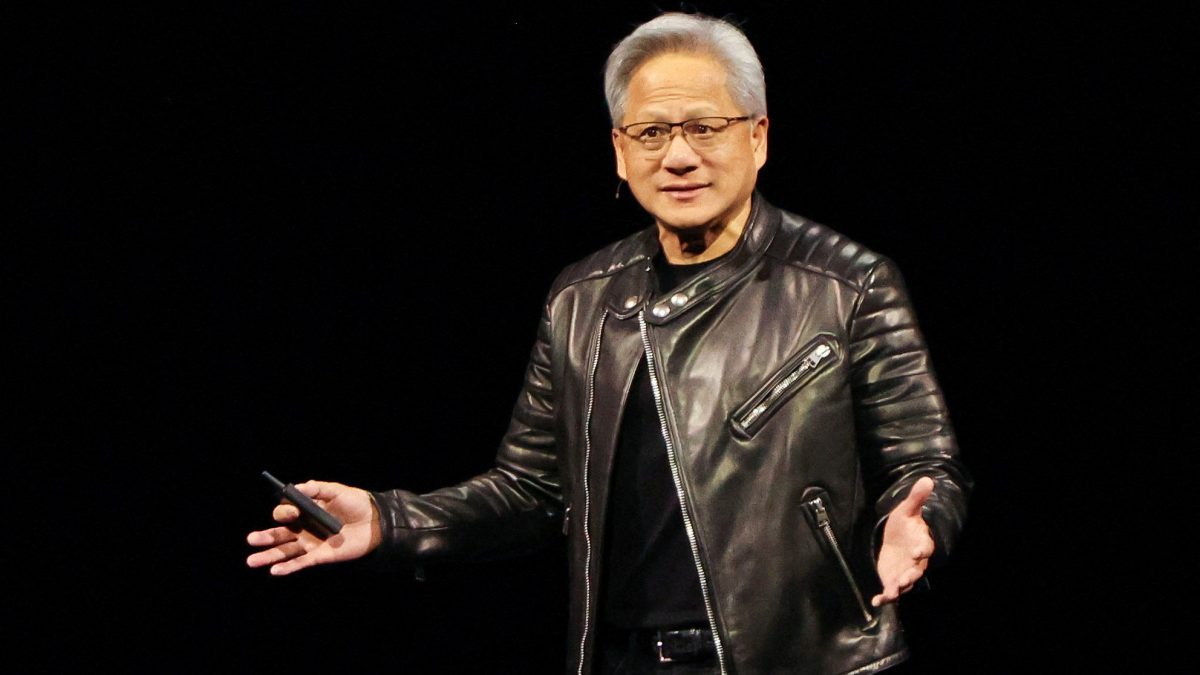

Nvidia unveiled the AI chips earlier than had been expected. In a speech at the Consumer Electronics Show in Las Vegas, the leader of the world’s most valuable company revealed new details about the chips, which will arrive later this year.

Vera-Rubin platform

The Vera-Rubin platform, the next-generation AI computing architecture designed for agentic AI, reasoning, and massive long-context workflows, is expected to debut this year. The flagship server contains 72 of the company’s graphics units and 36 of its central processors.

Huang showed how they can be strung together into “pods” with more than 1,000 Rubin chips and said they could improve the efficiency of generating what are known as “tokens” – the fundamental unit of AI systems – by 10 times.

Huang also highlighted that Rubin chips use a proprietary kind of data that the company hopes the wider industry will adopt.

Deliver a gigantic step up in performance

“This is how we were able to deliver such a gigantic step up in performance, even though we only have 1.6 times the number of transistors,” Huang said.

Nvidia has dominated the AI market, but it faces far more competition, from rivals such as Advanced Micro Devices (AMD.O) and Alphabet (GOOGL.O), in delivering chatbot services and other technologies to millions of users.

Huang’s speech also focused on how well the new chips will perform when serving chatbots, including a new layer of storage technology called “context memory storage” aimed at helping chatbots provide snappier responses to long questions and conversations.

Self-driving cars

In other announcements, Huang highlighted new software that can help self-driving cars make decisions about which path to take and leave a paper trail for engineers to use afterward. Nvidia showed research on the software, called Alpamayo, late last year, and Huang said on Monday it would be released more widely, along with the data used to train it, so that automakers can evaluate it.

“Not only do we open-source the models, we also open-source the data that we use to train those models, because only in that way can you truly trust how the models came to be,” Huang said from a stage in Las Vegas.

Confident in approach

Huang expressed confidence that the company's deals will not affect its core business and could instead result in new products that expand its lineup.

At the same time, Nvidia is eager to show that its latest products can outperform older chips like the H200, which U.S. President Donald Trump has allowed to flow to China. Reuters has reported that the chip, which was the predecessor to Nvidia’s current “Blackwell” chip, is in high demand in China, which has alarmed China hawks across the U.S. political spectrum.
